MLX Inference Benchmark: 4 Frameworks on Apple M5 Max with 35B LLM

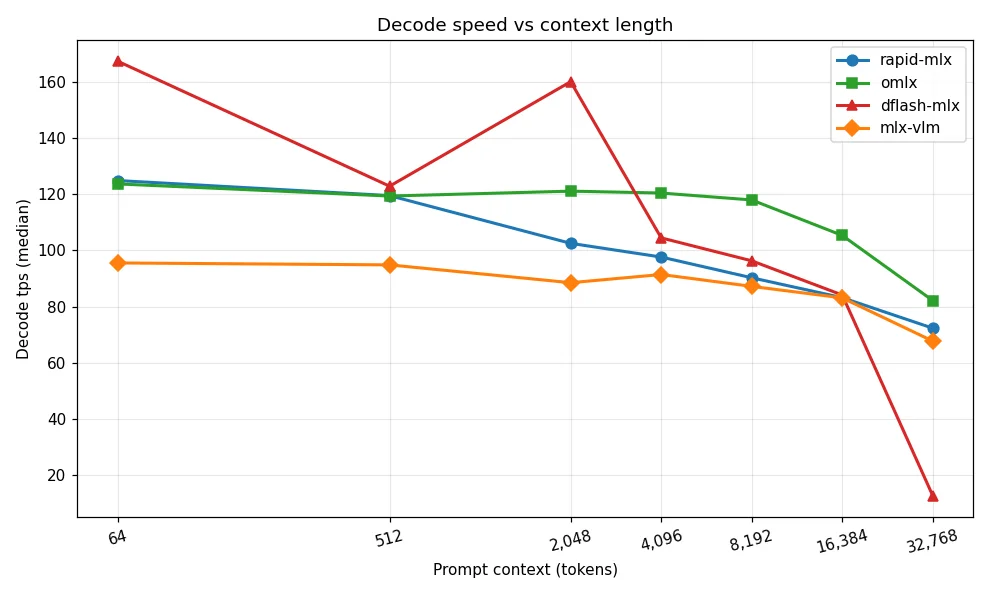

A hands-on benchmark of four MLX inference frameworks (rapid-mlx, omlx, dflash-mlx, mlx-vlm) on an Apple M5 Max with 64 GB of unified memory, running a 35B quantized MoE model at seven context lengths from 64 to 32K tokens. It compares decode speed, time to first token (TTFT), and stability, and closes with a framework selection guide for enterprise on-premise AI. Source data comes from ywchiu/mlx_benchmark_lab.
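For readers unfamiliar with the two headline metrics, here is a minimal sketch of how TTFT and decode speed are commonly derived from per-token timestamps. The function name `ttft_and_decode_speed` and its inputs are hypothetical, and the definitions are the conventional ones; they are an assumption here, not necessarily what ywchiu/mlx_benchmark_lab measures.

```python
from typing import Sequence


def ttft_and_decode_speed(
    request_start: float, token_times: Sequence[float]
) -> tuple[float, float]:
    """Return (TTFT in seconds, decode speed in tokens per second).

    Assumed definitions (not taken from the benchmark repository):
    - TTFT: time from sending the request to receiving the first token.
    - Decode speed: tokens generated after the first one, divided by
      the time elapsed between the first and the last token.
    """
    if not token_times:
        raise ValueError("no tokens were generated")

    ttft = token_times[0] - request_start

    # With a single token there is no decode interval to measure.
    decode_window = token_times[-1] - token_times[0]
    if len(token_times) < 2 or decode_window <= 0:
        return ttft, 0.0

    decode_tps = (len(token_times) - 1) / decode_window
    return ttft, decode_tps


# Hypothetical timestamps in seconds, e.g. collected with time.perf_counter().
ttft, tps = ttft_and_decode_speed(10.0, [10.5, 11.0, 11.5])
print(f"TTFT: {ttft:.2f} s, decode: {tps:.2f} tok/s")
```

Separating the two numbers matters because TTFT is dominated by prompt processing and grows with context length, while decode speed reflects steady-state token generation.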
